Summary of Reinforcement Learning Frameworks

There are many reinforcement learning frameworks out there, but since relatively few people use them, documentation tends to be lacking.

In this article, I have put together a rough summary of the pros and cons of each framework.

I hope this serves as a helpful reference when choosing a framework.

What Is a Reinforcement Learning Framework?

There is no strict definition, but here we consider something a reinforcement learning framework if it satisfies at least one of the following:

- Pre-implemented RL agent algorithms (e.g., TRPO, A3C, ...) are ready to use

- Training progress can be easily visualized during learning (e.g., TensorBoard support)

- You can add custom environments or agents for training

- Training code is provided in an abstracted form so you don't have to implement the details yourself

In short, it is a library that makes at least one of the following easier: model and training implementation, training execution, or visualization.

Caveats

Frameworks are convenient, but once you hit a bug, it can be very hard to recover:

- Since the user base is small, documentation tends to be scarce

- The framework implementations themselves tend to be complex

These are notable drawbacks. For example, the OpenAI Spinning Up documentation states:

RL libraries frequently make choices for abstraction that are good for code reuse between algorithms, but which are unnecessary if you're only writing a single algorithm or supporting a single use case.

The more feature-rich and abstract a framework becomes, the more complex its internal implementation gets, so when choosing a framework it is worth asking:

- Do you actually need a framework?

- How much do you want the framework to handle for you?

Viable Frameworks

RL Coach

A framework made by Intel that supports TensorFlow and MXNet.

Pros

-

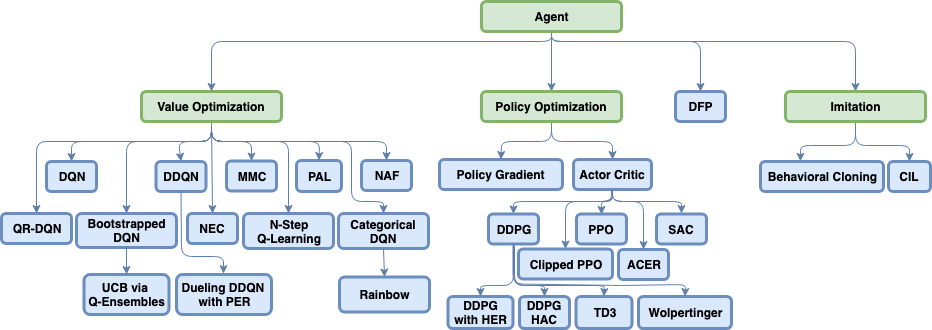

A very large number of algorithms are already implemented

-

Supports many training environments, including OpenAI Gym (https://github.com/NervanaSystems/coach#supported-environments)

-

A preset feature allows you to create files containing only hyperparameters to pass to the model By writing multiple presets, you can try different hyperparameters without modifying the code

-

You can display agent behavior in real time during training (https://nervanasystems.github.io/coach/usage.html#rendering-the-environment))

-

The dashboard feature enables detailed visualization of training results

- In multi-agent training, you can display all agents' training results on a single graph

- When running multiple experiments with the same algorithm, it can automatically display a graph showing the average of those learning curves -> This is useful for evaluating model performance when training is unstable
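
To make the idea of averaging learning curves concrete, here is a minimal sketch in plain Python. It is not how the Coach dashboard is implemented; the function name `average_learning_curves` and the sample returns are made up for illustration. It computes the per-step mean and standard deviation across several runs of the same algorithm.

```python
from statistics import mean, pstdev


def average_learning_curves(runs):
    """Return per-step (mean, std) across runs, truncated to the shortest run."""
    if not runs:
        raise ValueError("need at least one run")
    n_steps = min(len(run) for run in runs)
    averaged = []
    for step in range(n_steps):
        values = [run[step] for run in runs]
        averaged.append((mean(values), pstdev(values)))
    return averaged


# Hypothetical episode returns from three runs with different random seeds.
runs = [
    [10.0, 20.0, 30.0],
    [14.0, 16.0, 36.0],
    [12.0, 24.0, 33.0],
]

curve = average_learning_curves(runs)
for step, (avg, std) in enumerate(curve):
    print(f"step {step}: mean={avg:.1f} std={std:.2f}")
```

A large standard deviation at a given step suggests that a single run would be a poor indicator of the algorithm's performance there.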

Cons

- The overall implementation is complex, making it difficult to add your own agents

- Distributing agents is harder than with RLlib (described below), and requires AWS S3

- The aforementioned dashboard is not user-friendly

RLlib

A framework that can be used with any deep learning framework and excels at distributing agents. Implementing agents is somewhat easier with TensorFlow or PyTorch.

Pros

- You can set up multi-agent training just by implementing individual workers

  - The Trainer handles the "worker trains -> parameter update" process in multi-agent training

  - Multi-agent training is possible even with deep learning frameworks that don't natively support it

- Automatic hyperparameter tuning using distributed computing (the Tune library)

  - State-of-the-art hyperparameter tuning algorithms such as Population Based Training are also available

- Training environments can be added by writing them in OpenAI Gym format

- TensorBoard can be used for visualization
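
As a rough illustration of what "OpenAI Gym format" means, the sketch below defines a toy environment with the classic `reset()` and `step()` methods, where `step()` returns `(observation, reward, done, info)`. This assumes the older four-value Gym API. The class `CoinGuessEnv` is hypothetical, and it deliberately does not subclass `gym.Env` or declare `observation_space` and `action_space`, which a real environment would need, so that the example runs without Gym installed.

```python
import random


class CoinGuessEnv:
    """Toy environment following the classic Gym method signatures.

    Assumption for illustration: a real environment would subclass gym.Env
    and declare observation_space and action_space; both are omitted here.
    """

    def __init__(self, episode_length=5, seed=None):
        self.episode_length = episode_length
        self._rng = random.Random(seed)
        self._steps = 0
        self._coin = 0

    def reset(self):
        """Start a new episode and return the initial observation."""
        self._steps = 0
        self._coin = self._rng.randint(0, 1)
        return self._coin

    def step(self, action):
        """Apply an action and return (observation, reward, done, info)."""
        # Reward 1.0 when the action matches the bit that was just observed.
        reward = 1.0 if action == self._coin else 0.0
        self._steps += 1
        self._coin = self._rng.randint(0, 1)
        done = self._steps >= self.episode_length
        return self._coin, reward, done, {}


env = CoinGuessEnv(episode_length=5, seed=0)
obs = env.reset()
total, done = 0.0, False
while not done:
    # Trivial policy: repeat the observed bit as the action.
    obs, reward, done, _ = env.step(obs)
    total += reward
print("total reward:", total)
```

Any agent that only calls `reset()` and `step()` can interact with such a class, which is why the Gym interface works well as a common format across frameworks.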

Cons

- The internal implementation is complex, making it difficult to add your own agents

- You need to learn the distributed-processing idioms specific to the Ray library, which is used internally

Dopamine

A reinforcement learning framework from Google (though not an official Google project).

Pros

- Records detailed logs during training (learning curves, replay buffers, model parameters) in a memory-efficient manner using gin

- Replay videos of agent behavior can be easily created

Cons

- Only 4 agent algorithms are implemented (DQN, Rainbow, C51, IQN)

- Insufficient documentation

- The only supported environment is Atari, and since there is no documentation on how to add environments, adding your own is quite laborious

- There are only 4 contributors, and the majority of the code was written by one of them, which raises concerns about the project's future

(Update: There have been no commits since December of last year.)

Stable Baselines

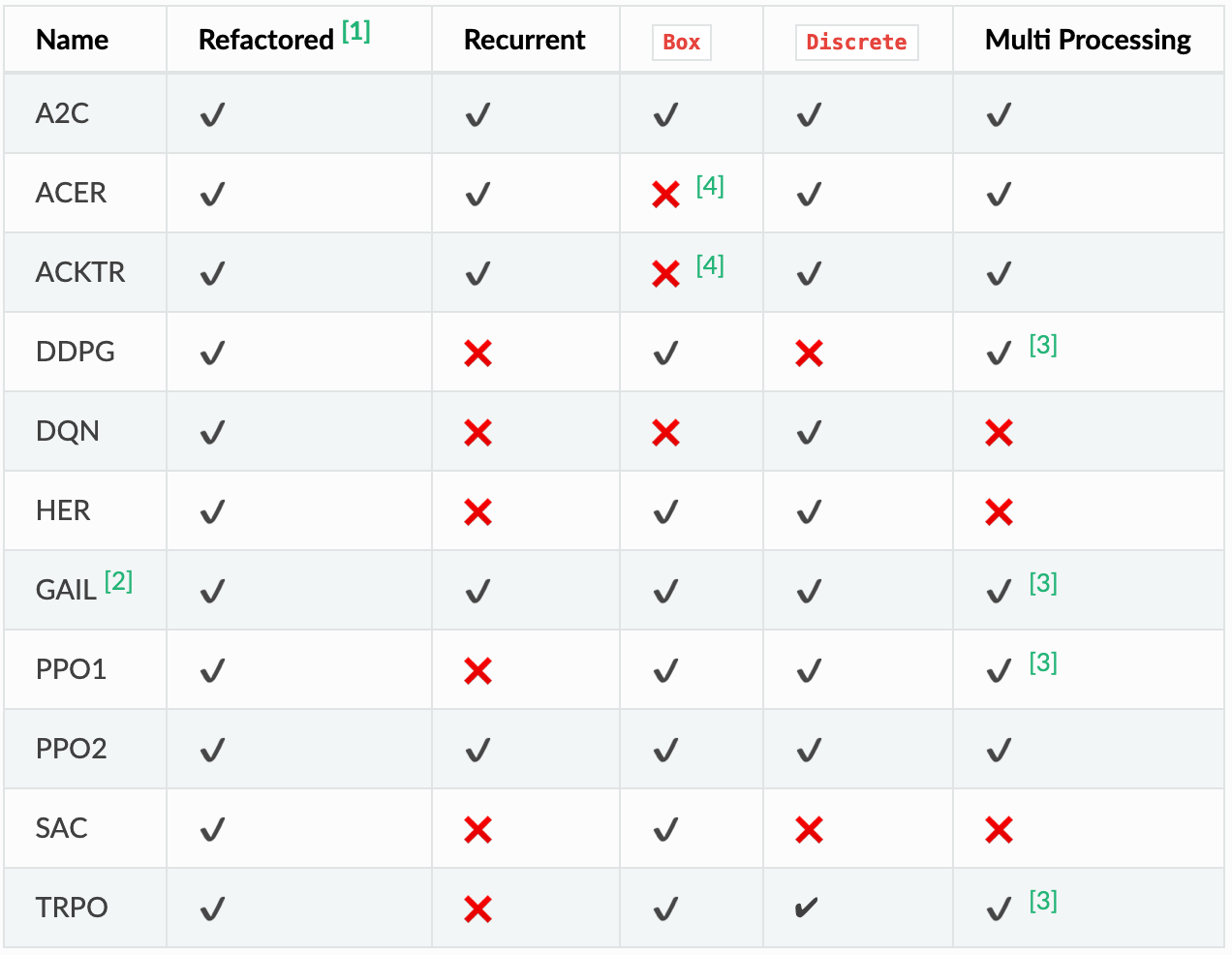

A fork of OpenAI Baselines that supports TensorFlow. It is more a collection of model implementations than a framework.

Pros

- The API is modeled after scikit-learn, making it easy to use

- Basic models are already implemented

- The agent algorithm implementations are well-commented and easy to understand, making them easy to modify

- Multi-process execution is also supported

Cons

- Only OpenAI Gym-compatible environments are supported, so you need to write your own wrapper for other environments

- You need to write your own code to run experiments
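
The wrapper mentioned above can be fairly small. The following is a minimal sketch, assuming a simulator whose methods do not follow the Gym convention. Both `LineWorldSimulator` and `GymStyleWrapper` are hypothetical names invented for this example, and the wrapper uses the classic four-value `step()` return. A wrapper intended for Stable Baselines would additionally subclass `gym.Env` and define the observation and action spaces.

```python
class LineWorldSimulator:
    """Hypothetical non-Gym simulator: walk along a line to reach a goal."""

    def __init__(self, goal=3):
        self.goal = goal
        self.position = 0

    def restart(self):
        self.position = 0

    def advance(self, delta):
        self.position += delta

    def finished(self):
        return self.position >= self.goal


class GymStyleWrapper:
    """Adapts LineWorldSimulator to the reset()/step() convention."""

    ACTIONS = {0: -1, 1: +1}  # action index -> movement along the line

    def __init__(self, simulator, max_steps=20):
        self.sim = simulator
        self.max_steps = max_steps
        self._steps = 0

    def reset(self):
        self.sim.restart()
        self._steps = 0
        return self.sim.position

    def step(self, action):
        self.sim.advance(self.ACTIONS[action])
        self._steps += 1
        reached = self.sim.finished()
        # The episode ends at the goal or when the step budget runs out.
        done = reached or self._steps >= self.max_steps
        reward = 1.0 if reached else -0.1
        return self.sim.position, reward, done, {}


env = GymStyleWrapper(LineWorldSimulator(goal=3))
obs = env.reset()
done = False
rewards = []
while not done:
    obs, reward, done, info = env.step(1)  # always move right
    rewards.append(reward)
print(obs, rewards)
```

Keeping the translation between the simulator's methods and `reset()`/`step()` in one class means the rest of the training code does not need to know which simulator is underneath.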

Frameworks That Seem Less Useful

Tensorforce

A framework for TensorFlow, as the name suggests.

Pros

- Properly modularized, making it easy to study and write code

Cons

- Not many agent algorithms are implemented, so you need to write your own

- The volume and depth of the documentation are somewhat lacking

Keras-RL

A framework for Keras, as the name suggests.

Pros

There is so little documentation that it is not even clear what the advantages are.

Cons

- The documentation is unbelievably sparse. Truly sparse. (https://keras-rl.readthedocs.io/en/latest/core)

- Not many agent algorithms are implemented, so you need to write your own