Gradient Descent

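Let's minimize the function f(x) = x^2 using gradient descent. At each step, x moves in the direction opposite to the gradient: x_new = x_old - learning_rate * gradient.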

from typing import TypeVar

import numpy as np
from japanize_matplotlib import japanize
import matplotlib.pyplot as plt
from matplotlib.figure import Figure

japanize()

Number = TypeVar("Number", np.ndarray, float)


# Function to minimize: f(x) = x^2
def f(x: Number) -> Number:
    return x**2


# Gradient (derivative) of f(x): df/dx = 2x
def gradient(x: Number) -> Number:
    return 2 * x
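One way to sanity-check the analytic gradient is to compare it with a finite-difference estimate. The sketch below is only an illustration and is not part of the original notebook. It redefines f and gradient for plain floats so that it runs on its own, and the helper numerical_gradient and its step size h are hypothetical names and values chosen for this example.

```python
def f(x: float) -> float:
    """Same objective as in the text: f(x) = x^2."""
    return x**2


def gradient(x: float) -> float:
    """Analytic derivative from the text: df/dx = 2x."""
    return 2 * x


def numerical_gradient(x: float, h: float = 1e-5) -> float:
    """Central-difference estimate of df/dx (hypothetical helper, assumed step size h)."""
    return (f(x + h) - f(x - h)) / (2 * h)


# Compare the two at a few sample points, including the starting point x = 4.0.
for x in (-3.0, 0.0, 4.0):
    analytic = gradient(x)
    numeric = numerical_gradient(x)
    print(f"x = {x:+.1f}: analytic = {analytic:+.4f}, numeric = {numeric:+.4f}")
```

If the two columns agree to several decimal places, the hand-derived gradient is very likely correct. The same check is useful for functions whose derivative is harder to work out by hand.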

Defining the Plot Function

def plot_steps(current_x: float, history_x: list[float], current_step: int) -> Figure:
    """
    Plot f(x) and the trajectory of gradient descent.

    Args:
        current_x: The current x position in gradient descent.
        history_x: A list of the x history so far.
        current_step: The current step number.

    Returns:
        Figure: The plot figure object.
    """
    # Create data for plotting f(x)
    x_curve = np.linspace(-5, 5, 100)
    y_curve = f(x_curve)

    # Create data for the computed points so far
    history_y = f(np.array(history_x))

    # Create the plot
    fig, ax = plt.subplots(figsize=(10, 6))
    ax.plot(x_curve, y_curve, "b-", label="f(x) = x^2")
    ax.plot(history_x, history_y, "ro--", label="Gradient descent trajectory", markersize=8)
    ax.plot(current_x, f(current_x), "g*", markersize=15, label="Current position")

    # Add labels and title
    ax.set_title(f"Gradient Descent: Step {current_step}")
    ax.set_xlabel("x")
    ax.set_ylabel("f(x)")
    ax.legend()
    ax.grid(True)

    return fig

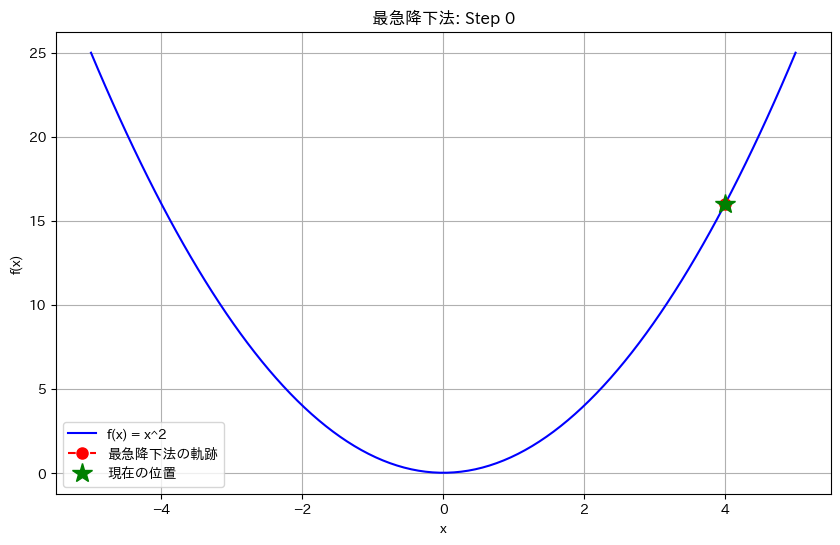

Parameter Settings

- Learning rate: learning_rate = 0.1
- Initial value: initial_x = 4.0
- Number of steps: total_steps = 3

The learning rate scales the gradient, so it determines how far x moves in each update.
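To get a feel for this, the sketch below runs the same update rule with a few different learning rates. It is an illustration under stated assumptions rather than part of the original notebook: run_descent is a hypothetical helper, and the learning rates other than 0.1 are values picked for comparison. Because the gradient of x^2 is 2x, each update computes x - learning_rate * 2x.

```python
def gradient(x: float) -> float:
    """Derivative of f(x) = x^2, as defined in the text."""
    return 2 * x


def run_descent(learning_rate: float, initial_x: float = 4.0, steps: int = 3) -> list[float]:
    """Return the x values visited by gradient descent (hypothetical helper)."""
    xs = [initial_x]
    for _ in range(steps):
        xs.append(xs[-1] - learning_rate * gradient(xs[-1]))
    return xs


# 0.1 is the value used in the text; the others are assumed values for comparison.
for lr in (0.1, 0.5, 1.0, 1.1):
    trajectory = run_descent(lr)
    print(f"learning_rate = {lr}: " + ", ".join(f"{x:.4f}" for x in trajectory))
```

For this particular function, a small rate such as 0.1 approaches the minimum gradually, 0.5 lands on the minimum in one step, 1.0 bounces between 4 and -4 forever, and anything larger moves further away with every step.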

learning_rate = 0.1

history_x = [current_x := 4.0]

total_steps = 3

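# Plot the starting point (step 0). plt.show() displays all open figures,
# so the figure is created first and not passed as an argument.
plot_steps(current_x, history_x, 0)
plt.show()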

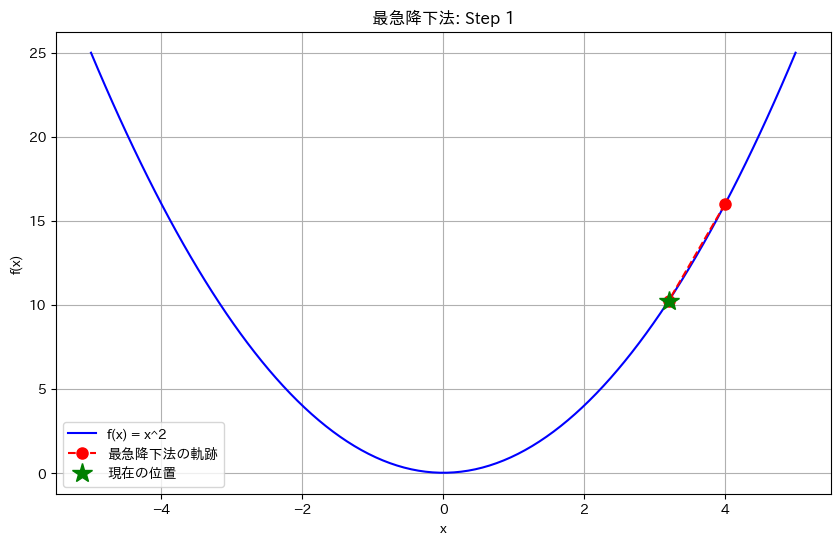

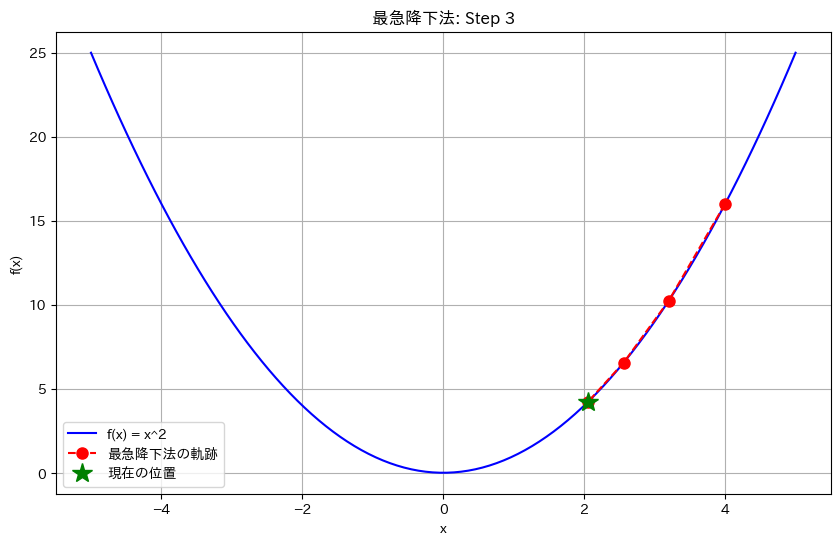

Running Gradient Descent

for i in range(total_steps):
    grad = gradient(current_x)

    # Gradient descent update step:
    # x_new = x_old - learning_rate * grad
    prev_x = current_x
    current_x = current_x - learning_rate * grad
    history_x.append(current_x)

    step_num = i + 1
    print(f"\nStep {step_num}:")
    print(f" Current position: x = {prev_x:.4f}")
    print(f" Gradient ≈ {grad:.4f}")
    print(f" New position: x = {current_x:.4f}")
    print(f" Current function value: f(x) = {f(current_x):.4f}")

    # Draw the trajectory so far; plt.show() displays the figure just created
    fig = plot_steps(current_x, history_x, step_num)
    plt.show()

Step 1:
 Current position: x = 4.0000
 Gradient ≈ 8.0000
 New position: x = 3.2000
 Current function value: f(x) = 10.2400

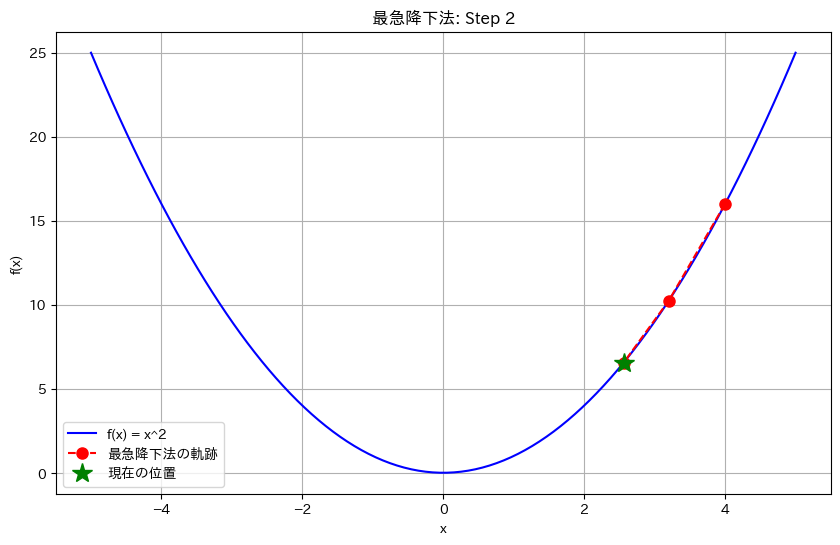

Step 2:
 Current position: x = 3.2000
 Gradient ≈ 6.4000
 New position: x = 2.5600
 Current function value: f(x) = 6.5536

Step 3:
 Current position: x = 2.5600
 Gradient ≈ 5.1200
 New position: x = 2.0480
 Current function value: f(x) = 4.1943
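The positions above shrink by the same factor at every step. Substituting the gradient 2x into the update rule gives x_new = x_old * (1 - 2 * learning_rate), so after n steps x should equal initial_x * (1 - 2 * learning_rate)^n. The sketch below checks this closed form against the iterative update. It is an illustration only; the variable names initial_x and closed_form are chosen for this example and do not come from the notebook code.

```python
learning_rate = 0.1
initial_x = 4.0

x = initial_x
for step in range(1, 4):
    # Same update rule as the loop in the text, with the gradient 2x written out.
    x = x - learning_rate * 2 * x
    # Closed form: each step multiplies x by (1 - 2 * learning_rate) = 0.8.
    closed_form = initial_x * (1 - 2 * learning_rate) ** step
    print(f"Step {step}: iterative x = {x:.4f}, closed form x = {closed_form:.4f}")
```

Both columns should match the "New position" values printed for each step, which also explains why the approach to the minimum slows down: every step covers a fixed fraction of the remaining distance to x = 0.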

Bonus: Gradient Descent with More Steps

steps = 15

history_x = [current_x := 4.0]

history_figs = []

for i in range(steps):
    grad = gradient(current_x)
    current_x = current_x - learning_rate * grad
    history_x.append(current_x)
    history_figs.append(plot_steps(current_x, history_x, i + 1))

import imageio  # noqa

# Save each figure as a temporary PNG and append it as one frame of the GIF
with imageio.get_writer(
    "gradient_descent.gif", mode="I", duration=0.5, loop=0
) as writer:
    for fig in history_figs:
        fig.savefig("temp.png")
        image = imageio.v2.imread("temp.png")
        writer.append_data(image)
        plt.close(fig)