Smarter A/B Testing

powered by Reinforcement Learning

Prerequisites

- A/B testing: A method for comparing two variations to determine which is better

- Different from gradual rollout in canary releases

- In this session, we consider an example of comparing N design variations on an e-commerce site

Standard A/B Testing

- Randomly assign users to one of A, B, ... N

- Display a different design to each group

- Compare cart-in rates after a fixed period

Problems

- How to determine the test duration (1 week? 1 month?)

- Potential for losses during the test period -> Displaying a poor design worsens conversions

Example

When redesigning a product detail page:

- A: Existing design (cart-in rate: 5%)

- B: New design (cart-in rate: ??)

Example

Potential losses from A/B testing in this case:

- If B is better: A longer A/B test delays migration to B

- If A is better: A higher proportion of B increases losses

Solutions

- How to determine the test duration

- -> End when the required number of samples has been collected

- Potential for losses during the test period

- -> Display the better design at a higher rate

Reinforcement Learning

The Exploration-Exploitation Dilemma

- Exploration: Try new designs

- Exploitation: Display the current best design

-> Exploration carries risk, but exploitation alone brings no improvement

Multi-Armed Bandit Problem

- N slot machines

- Each has a fixed winning probability

- Want to find the machine with the highest winning probability in the fewest trials

Multi-Armed Bandit Problem

In terms of A/B testing...

- N designs

- Each has a fixed cart-in rate

- Want to find the design with the highest cart-in rate in the fewest impressions (shortest test period)

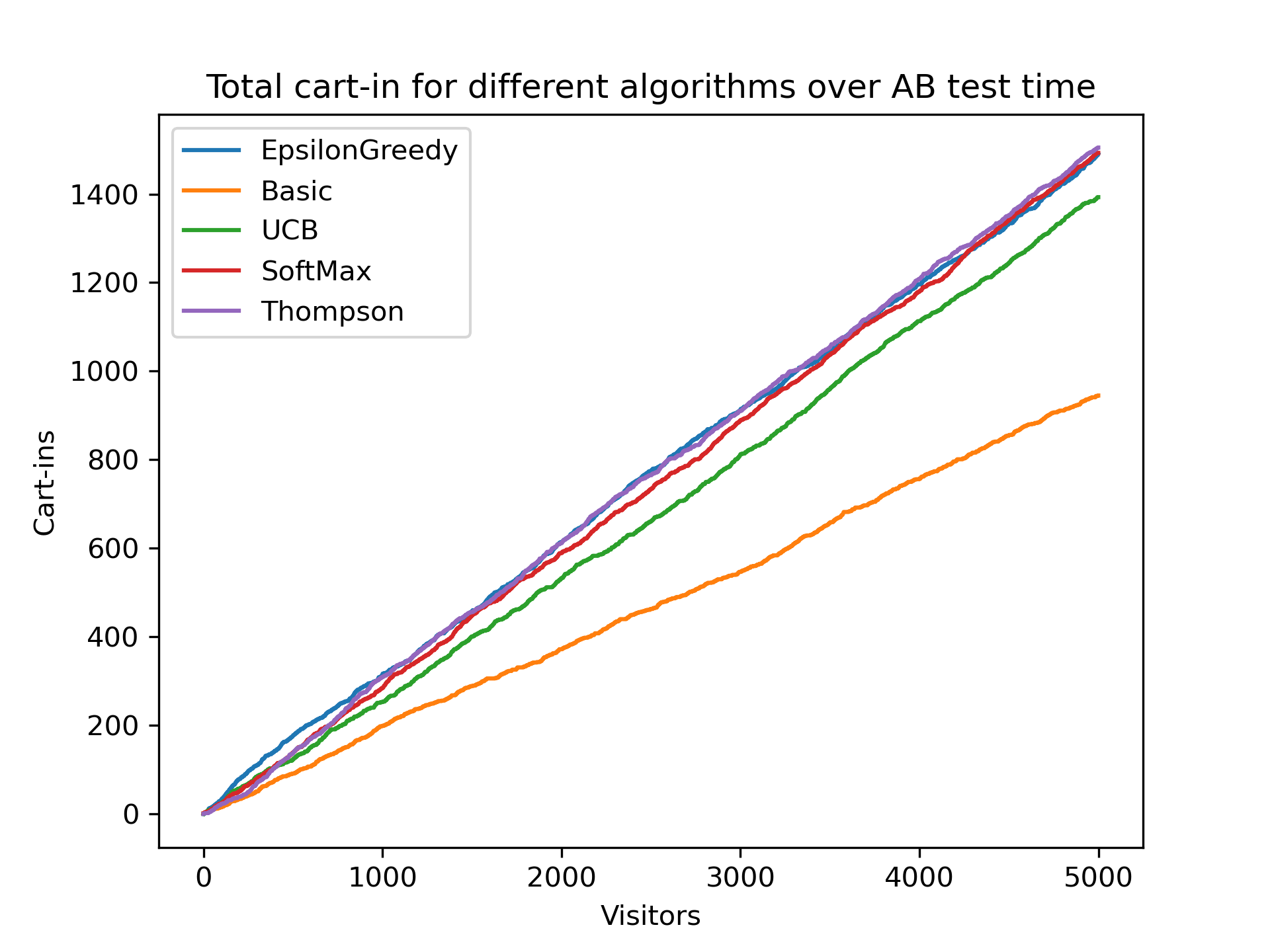

Bandit Algorithms

- The details are complex, so here is a quick overview

- Consider an A/B test for N designs

- CVR = cart-in rate

Bandit Algorithms

- Epsilon-greedy

- Softmax

- Thompson Sampling

- UCB1

How to Read the Graphs

- x-axis: Number of impressions (new visitors)

- y-axis: Proportion of time the best design was displayed

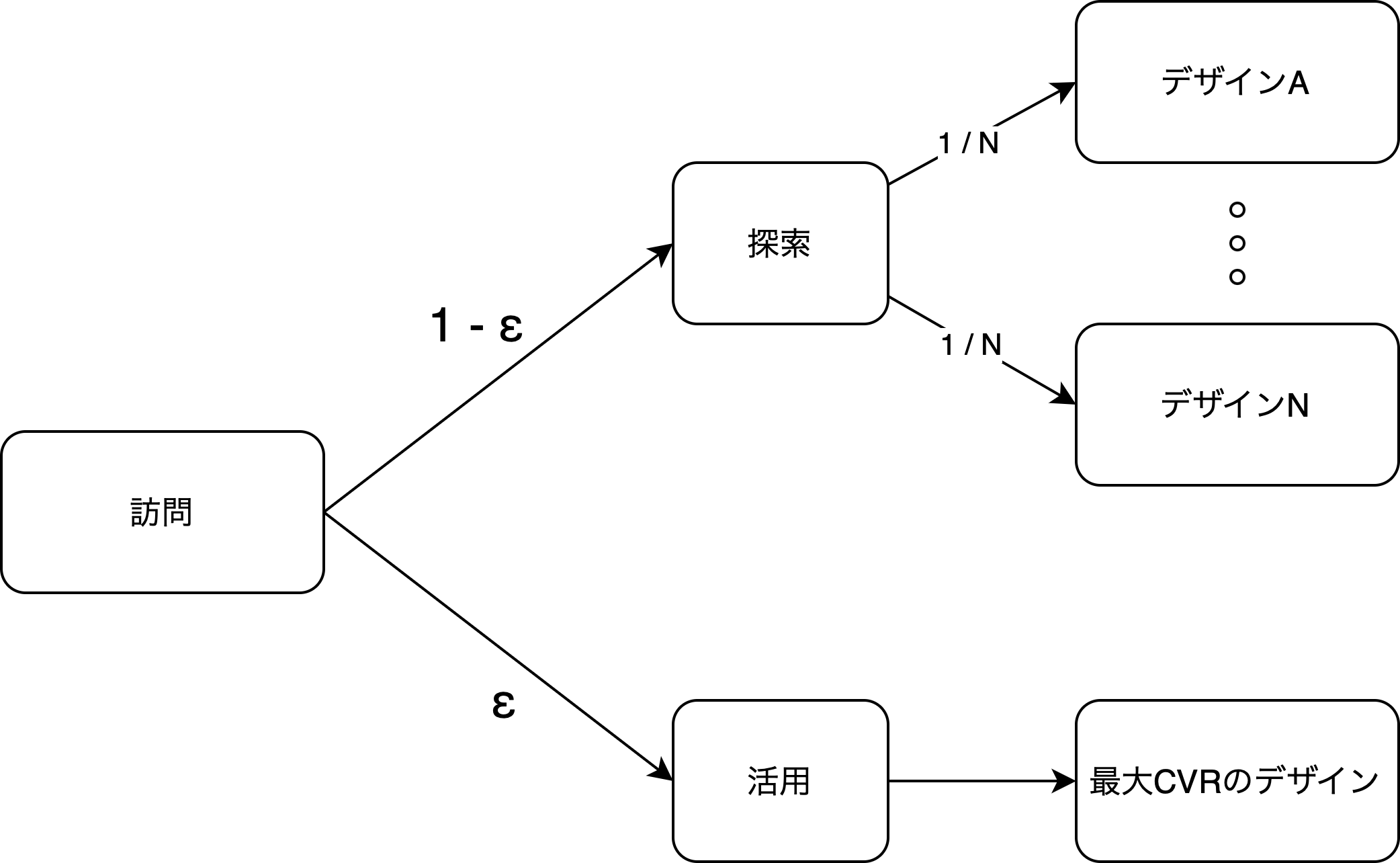

1. Epsilon-greedy

- With probability epsilon, explore

- Display a completely random design

- With probability 1-epsilon, exploit

- Display the current best design

- The best design is determined by the interim CVR of each design

- Epsilon is typically between 0.1 and 0.3

1. Epsilon-greedy

Epsilon-greedy

- Strong randomness

- Epsilon-dependent

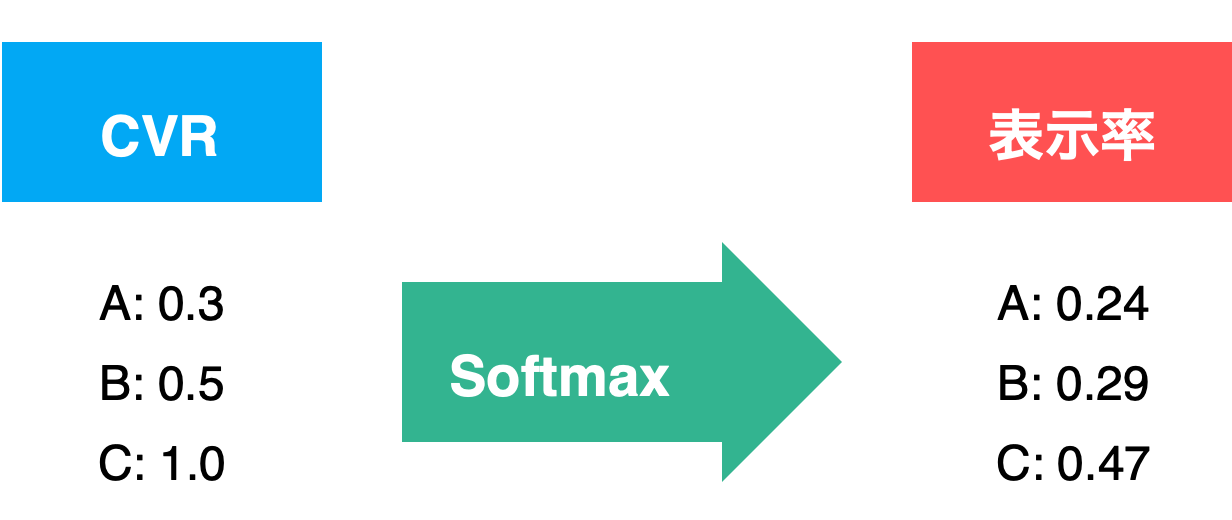

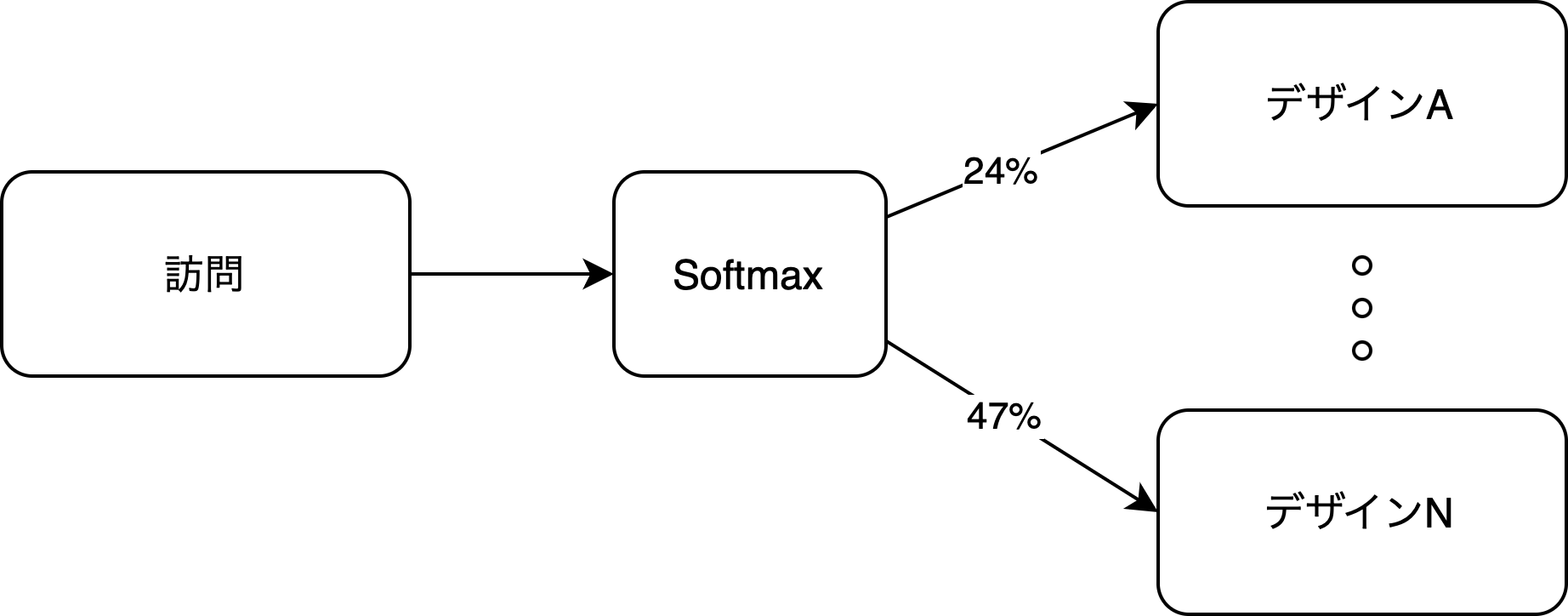

2. Softmax

- Selects designs probabilistically based on each design's interim CVR

- Designs with higher CVR have a higher probability of being selected

- Reduces wasteful exploration

2. Softmax

- A function that converts any collection of numbers into probabilities

2. Softmax

- Epsilon-greedy tests all designs equally

- Softmax preferentially tests designs with higher expected values

Softmax

- Fast convergence

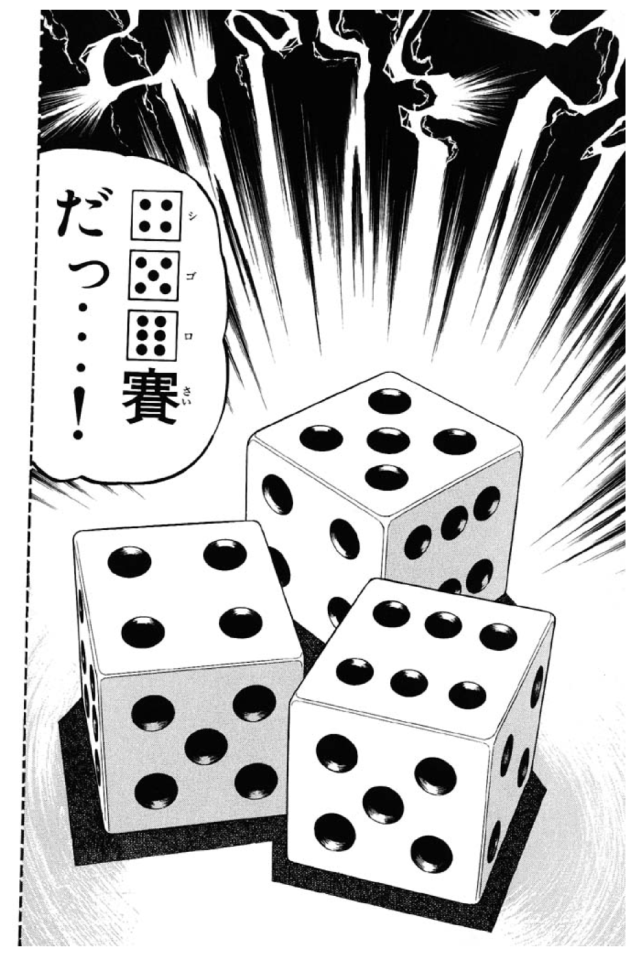

3. Thompson Sampling

- Estimates the CVR for each design using Bayesian estimation to create distributions, then samples to select a design

- Overcomes the weaknesses of Softmax

- The serious approach used by SEO companies and the like

3. Thompson Sampling

- Create a loaded die for each design based on its CVR

- Designs with higher CVR get better dice

- Roll the dice and select the design with the highest roll

- Update the CVR and dice after each design display

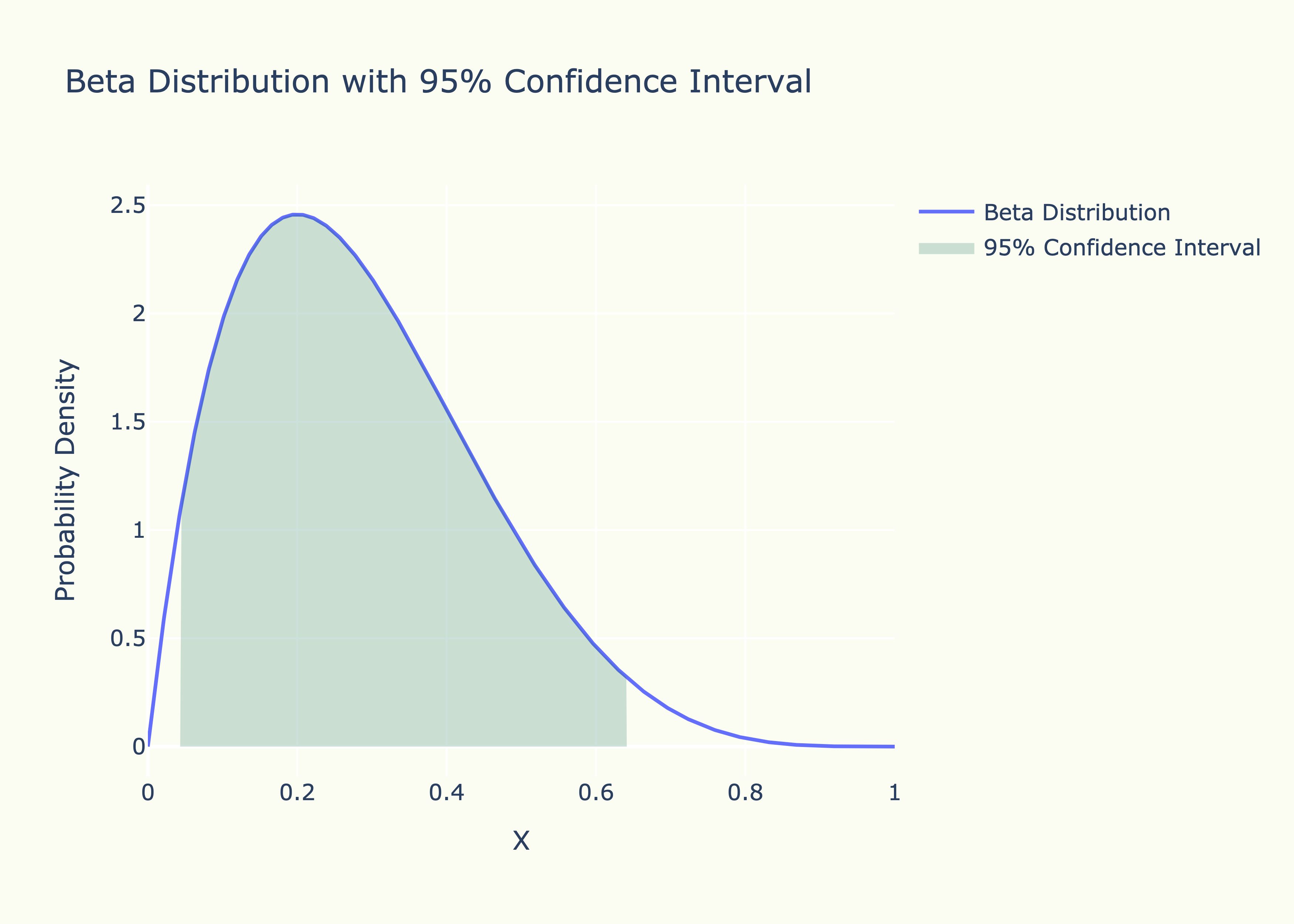

3. Thompson Sampling

- Bayesian estimation will be covered another time

- Softmax treats and as equal CVRs, but the latter is less likely to deviate from

- Thompson Sampling produces different dice behavior even for the same CVR when the number of trials differs (Beta distribution)

Thompson Sampling

- Slower convergence

- Higher stability

4. UCB1

- Selects designs using confidence intervals

- No randomness

- Overcomes the weaknesses of Softmax

4. UCB1

- Confidence interval: The range in which the true value of a prediction is likely to fall

- Compute the confidence interval of the CVR for each design and display the design with the highest upper bound

UCB1

- More wasteful exploration

- No tuning required

What About Losses?

How much cumulative conversion was gained compared to displaying A/B at 50% each as in standard A/B testing?

Advantages of Bandit

- Converges to the optimal design fastest

- Can be simulated in advance

- Required sample size (duration) is known

- Expected losses are known

Disadvantages of Bandit

- Requires integration with data sources

- Does not converge when there is no difference in CVR (becomes standard A/B testing)

- Requires parameter tuning

Summary

- Problems with conventional A/B testing

- How to determine the test duration

- Potential for losses during the test period

- A/B testing with reinforcement learning

- Shortest test period

- Continuously displays the optimal design

Next time, we will introduce

Component-level CVR Impact Analysis

using logistic regression